Benchmarking DuckDB From Java: Fast INSERT, UPDATE, and DELETE

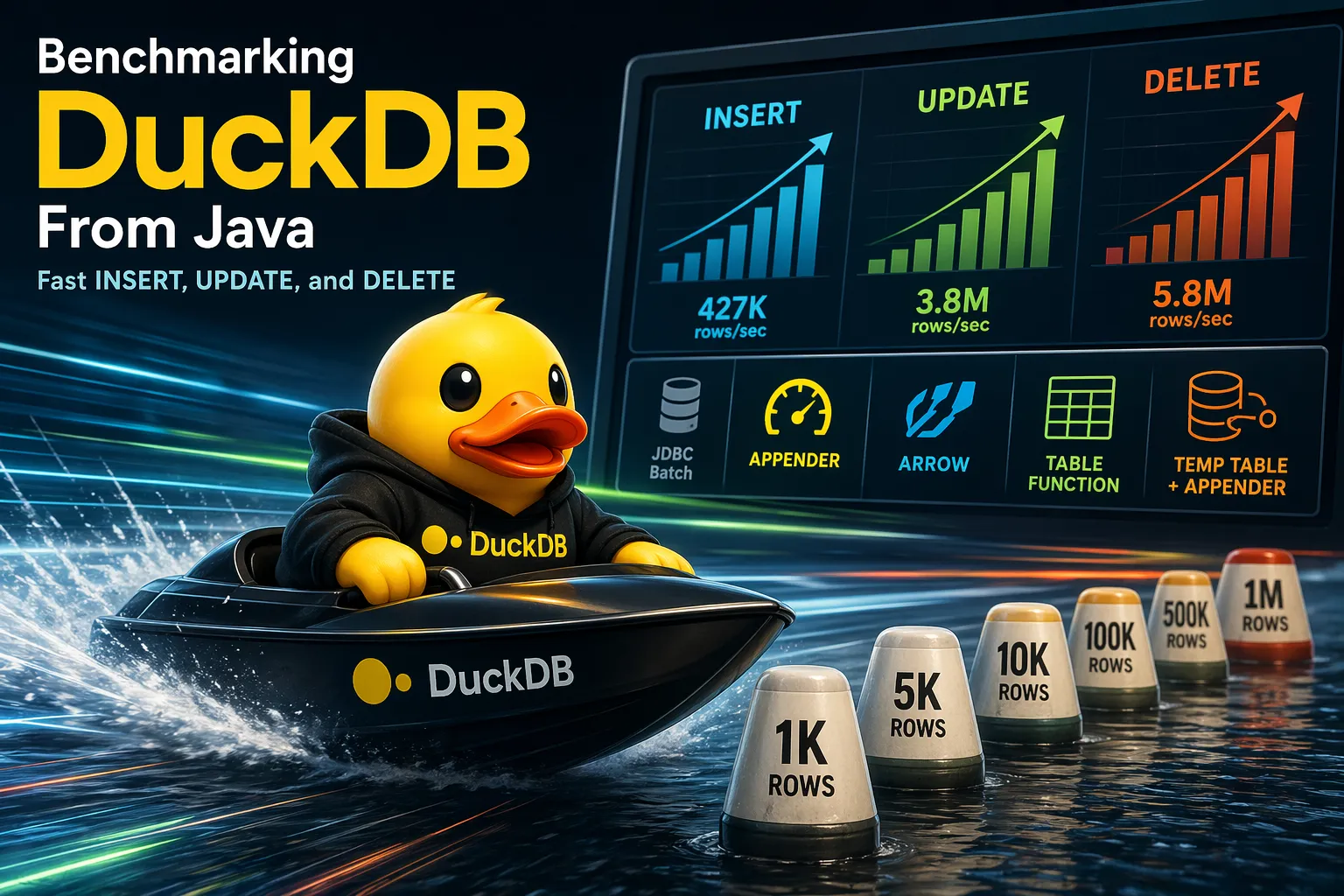

DuckDB’s Java documentation recommends the Appender as the efficient way to insert data. But two questions come up in practice: how do you efficiently UPDATE and DELETE? And is the Appender really the fastest option for INSERT, or are there faster alternatives?

We benchmarked 7 different methods — from naive row-by-row JDBC to the Appender, Arrow streams, and DuckDB’s Java table function API — across INSERT, UPDATE, and DELETE, scaling from 1K to 1M rows.

The Setup

Section titled “The Setup”We used a sensor-readings table with 9 columns covering DuckDB’s core types: UUID, TIMESTAMPTZ, DOUBLE, DECIMAL(10,2), SMALLINT, BOOLEAN, VARCHAR, and VARCHAR[] (same schema as our PostgreSQL benchmark).

Each method was warmed up, then measured multiple times (median taken). After every operation, we verified correctness: row counts, spot-checked values, and for UPDATE specifically verified the target column was actually modified. DuckDB runs on disk with a single connection.

All benchmark code is open source: examples/java-duckdb-benchmark.

INSERT Methods

Section titled “INSERT Methods”We tested 6 insert methods:

1. Individual INSERT — One executeUpdate() per row in a transaction. Simple but slow: ~3.3K rows/sec at all sizes. (code)

2. JDBC Batch — Standard JDBC addBatch() / executeBatch() via the DuckDB JDBC driver. On PostgreSQL this is a solid optimization; on DuckDB it barely helps — ~5K rows/sec. (code)

3. Multi-value INSERT — Build INSERT INTO t VALUES (...),(...),(...) ... with up to 10K rows per statement. ~14K rows/sec. (code)

4. Appender — DuckDB’s recommended bulk insert API. Bypasses the SQL parser — you call beginRow(), append() for each column, and endRow(): (code)

try (var appender = ((DuckDBConnection) conn) .createAppender(DuckDBConnection.DEFAULT_SCHEMA, "sensor_readings")) { for (var r : rows) { appender.beginRow(); appender.append(r.deviceId()); appender.append(r.timestamp()); appender.append(r.temperature()); // ... remaining columns appender.endRow(); }}5. Arrow Stream — Build Apache Arrow columnar vectors in Java, register them with DuckDB via registerArrowStream, then INSERT INTO ... SELECT FROM stream. Since Arrow vectors must be fully built before registering, the data is processed in chunks of 10K rows — each chunk requires a separate INSERT ... SELECT statement. (code)

6. Table Function (UDF) — Register a Java table function via DuckDB’s DuckDBFunctions.tableFunction() API. DuckDB pulls rows directly from a Java Iterator in chunks of 2,048. Unlike Arrow, this executes a single SQL statement regardless of data size — DuckDB calls the apply() callback repeatedly until the iterator is exhausted: (code)

DuckDBFunctions.tableFunction() .withName("_bench_insert_rows") .withFunction(new DuckDBTableFunction<>() { public long apply(DuckDBTableFunctionCallInfo info, DuckDBDataChunkWriter output) { // Fill columnar vectors, up to output.capacity() rows // DuckDB calls this repeatedly until we return 0 } }) .register(conn);

// Then: INSERT INTO sensor_readings SELECT ... FROM _bench_insert_rows()UPDATE and DELETE Methods

Section titled “UPDATE and DELETE Methods”For UPDATE and DELETE, Individual and JDBC Batch work the same way as for INSERT — one statement per row or batch. (update code, delete code)

The interesting methods are the set-based approaches:

UNNEST list params — Pass all keys as parallel array parameters: (code)

DELETE FROM sensor_readingsWHERE (device_id, timestamp) IN ( SELECT UNNEST(?::UUID[]), UNNEST(?::TIMESTAMPTZ[]));Arrow stream join — Stage keys as Arrow vectors, then join against the main table. Same registerArrowStream technique but with only the key columns. (update code, delete code)

DELETE FROM sensor_readings sUSING arrow_stream kWHERE s.device_id = k.device_id::UUID AND s.timestamp = k.timestampTemp table + Appender — Create a temp table, bulk-load keys with the Appender, then execute a set-based join. This turns DuckDB’s INSERT-only Appender into a tool for bulk UPDATE and DELETE: (update code, delete code)

// 1. Create staging tablestmt.execute("CREATE TEMP TABLE _keys (device_id UUID, timestamp TIMESTAMPTZ)");

// 2. Bulk-load keys via Appendertry (var appender = ((DuckDBConnection) conn) .createAppender(DuckDBConnection.DEFAULT_SCHEMA, "_keys")) { for (var r : rows) { appender.beginRow(); appender.append(r.deviceId()); appender.append(r.timestamp()); appender.endRow(); }}

// 3. Set-based deletestmt.execute(""" DELETE FROM sensor_readings s USING _keys k WHERE s.device_id = k.device_id AND s.timestamp = k.timestamp""");stmt.execute("DROP TABLE _keys");The same pattern works for UPDATE — add the new values to the staging table:

CREATE TEMP TABLE _update_keys (device_id UUID, timestamp TIMESTAMPTZ, temperature DOUBLE);-- Appender-load keys + new valuesUPDATE sensor_readings sSET temperature = k.temperatureFROM _update_keys kWHERE s.device_id = k.device_id AND s.timestamp = k.timestamp;Table function join — Same as the insert table function, but DuckDB uses it as a key source in a DELETE ... USING or UPDATE ... FROM join. (update code, delete code)

The Results

Section titled “The Results”DuckDB Insert / Update / Delete Benchmark

Comparing JDBC :batch, appender, Arrow, UNNEST list params, and temp-table join

INSERT: Throughput vs Total Rows

UPDATE: Throughput vs Total Rows

DELETE: Throughput vs Total Rows

INSERT results

1,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual INSERT | 345.9 | 2,891 | 29.3x slower |

| Batch (:batch) | 216.8 | 4,613 | 18.3x slower |

| Multi-value INSERT | 53.8 | 18,582 | 4.6x slower |

| Appender (:appender) | 11.8 | 84,630 | fastest |

| Arrow stream | 19.2 | 51,977 | 1.6x slower |

| Table function | 13.6 | 73,622 | 1.1x slower |

5,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual INSERT | 1,582.3 | 3,160 | 64.4x slower |

| Batch (:batch) | 1,009.2 | 4,954 | 41.1x slower |

| Multi-value INSERT | 334.5 | 14,948 | 13.6x slower |

| Appender (:appender) | 35.0 | 142,985 | 1.4x slower |

| Arrow stream | 26.1 | 191,677 | 1.1x slower |

| Table function | 24.6 | 203,537 | fastest |

10,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual INSERT | 3,016.6 | 3,315 | 111.4x slower |

| Batch (:batch) | 1,950.2 | 5,128 | 72.0x slower |

| Multi-value INSERT | 750.5 | 13,325 | 27.7x slower |

| Appender (:appender) | 62.3 | 160,472 | 2.3x slower |

| Arrow stream | 27.1 | 369,291 | fastest |

| Table function | 39.2 | 254,808 | 1.4x slower |

50,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual INSERT | 15,062.9 | 3,319 | 106.5x slower |

| Batch (:batch) | 9,805.0 | 5,099 | 69.3x slower |

| Multi-value INSERT | 3,722.1 | 13,433 | 26.3x slower |

| Appender (:appender) | 347.8 | 143,751 | 2.5x slower |

| Arrow stream | 188.1 | 265,791 | 1.3x slower |

| Table function | 141.5 | 353,377 | fastest |

100,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual INSERT | 30,299.7 | 3,300 | 128.7x slower |

| Batch (:batch) | 19,854.5 | 5,037 | 84.3x slower |

| Multi-value INSERT | 7,300.8 | 13,697 | 31.0x slower |

| Appender (:appender) | 629.1 | 158,945 | 2.7x slower |

| Arrow stream | 235.4 | 424,734 | fastest |

| Table function | 250.2 | 399,683 | 1.1x slower |

500,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual INSERT | - | - | skipped |

| Batch (:batch) | - | - | skipped |

| Multi-value INSERT | - | - | skipped |

| Appender (:appender) | 3,120.3 | 160,243 | 2.6x slower |

| Arrow stream | 1,288.7 | 387,994 | 1.1x slower |

| Table function | 1,179.9 | 423,747 | fastest |

1,000,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual INSERT | - | - | skipped |

| Batch (:batch) | - | - | skipped |

| Multi-value INSERT | - | - | skipped |

| Appender (:appender) | 6,544.0 | 152,812 | 2.5x slower |

| Arrow stream | 2,922.8 | 342,139 | 1.1x slower |

| Table function | 2,608.4 | 383,377 | fastest |

UPDATE results

1,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual UPDATE | 226.2 | 4,421 | 20.8x slower |

| Batch (:batch) | 192.0 | 5,208 | 17.7x slower |

| UNNEST list params | 12.2 | 81,756 | 1.1x slower |

| Arrow stream join | 11.6 | 85,900 | 1.1x slower |

| Temp table join | 10.9 | 91,933 | fastest |

| Table function | 11.0 | 90,759 | ~same |

5,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual UPDATE | 1,179.6 | 4,239 | 95.1x slower |

| Batch (:batch) | 1,052.6 | 4,750 | 84.8x slower |

| UNNEST list params | 17.0 | 294,131 | 1.4x slower |

| Arrow stream join | 15.2 | 329,765 | 1.2x slower |

| Temp table join | 12.4 | 402,927 | fastest |

| Table function | 12.6 | 396,662 | ~same |

10,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual UPDATE | 2,863.7 | 3,492 | 211.6x slower |

| Batch (:batch) | 2,298.9 | 4,350 | 169.9x slower |

| UNNEST list params | 24.0 | 417,146 | 1.8x slower |

| Arrow stream join | 16.7 | 597,372 | 1.2x slower |

| Temp table join | 13.5 | 739,041 | fastest |

| Table function | 15.1 | 660,487 | 1.1x slower |

50,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual UPDATE | 14,630.4 | 3,418 | 688.1x slower |

| Batch (:batch) | 13,578.4 | 3,682 | 638.6x slower |

| UNNEST list params | 119.9 | 417,188 | 5.6x slower |

| Arrow stream join | 69.0 | 724,504 | 3.2x slower |

| Temp table join | 21.3 | 2,351,675 | fastest |

| Table function | 24.6 | 2,033,870 | 1.2x slower |

100,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual UPDATE | 36,936.2 | 2,707 | 1199.7x slower |

| Batch (:batch) | 31,714.7 | 3,153 | 1030.1x slower |

| UNNEST list params | 232.0 | 431,070 | 7.5x slower |

| Arrow stream join | 138.0 | 724,798 | 4.5x slower |

| Temp table join | 30.8 | 3,248,036 | fastest |

| Table function | 36.9 | 2,707,682 | 1.2x slower |

500,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual UPDATE | - | - | skipped |

| Batch (:batch) | - | - | skipped |

| UNNEST list params | 1,223.8 | 408,575 | 7.6x slower |

| Arrow stream join | 669.7 | 746,591 | 4.2x slower |

| Temp table join | 160.9 | 3,106,897 | fastest |

| Table function | 198.9 | 2,513,792 | 1.2x slower |

1,000,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual UPDATE | - | - | skipped |

| Batch (:batch) | - | - | skipped |

| UNNEST list params | 2,340.3 | 427,299 | 9.1x slower |

| Arrow stream join | 1,321.6 | 756,654 | 5.1x slower |

| Temp table join | 257.3 | 3,887,168 | fastest |

| Table function | 330.8 | 3,023,035 | 1.3x slower |

DELETE results

1,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual DELETE | 199.4 | 5,016 | 20.1x slower |

| Batch (:batch) | 166.8 | 5,997 | 16.8x slower |

| UNNEST list params | 10.9 | 91,474 | 1.1x slower |

| Arrow stream join | 12.8 | 78,130 | 1.3x slower |

| Temp table join | 10.6 | 94,494 | 1.1x slower |

| Table function | 9.9 | 100,844 | fastest |

5,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual DELETE | 916.2 | 5,457 | 81.0x slower |

| Batch (:batch) | 808.0 | 6,188 | 71.5x slower |

| UNNEST list params | 15.6 | 320,613 | 1.4x slower |

| Arrow stream join | 14.5 | 343,747 | 1.3x slower |

| Temp table join | 11.8 | 423,315 | ~same |

| Table function | 11.3 | 442,306 | fastest |

10,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual DELETE | 1,820.0 | 5,495 | 142.6x slower |

| Batch (:batch) | 1,784.6 | 5,603 | 139.8x slower |

| UNNEST list params | 22.5 | 444,311 | 1.8x slower |

| Arrow stream join | 16.5 | 606,296 | 1.3x slower |

| Temp table join | 12.8 | 783,441 | fastest |

| Table function | 14.5 | 689,856 | 1.1x slower |

50,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual DELETE | 10,988.2 | 4,550 | 584.2x slower |

| Batch (:batch) | 8,356.6 | 5,983 | 444.3x slower |

| UNNEST list params | 94.6 | 528,407 | 5.0x slower |

| Arrow stream join | 64.5 | 774,737 | 3.4x slower |

| Temp table join | 18.8 | 2,658,112 | fastest |

| Table function | 25.1 | 1,993,260 | 1.3x slower |

100,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual DELETE | 23,546.4 | 4,247 | 836.7x slower |

| Batch (:batch) | 21,117.7 | 4,735 | 750.4x slower |

| UNNEST list params | 192.2 | 520,213 | 6.8x slower |

| Arrow stream join | 130.4 | 766,958 | 4.6x slower |

| Temp table join | 28.1 | 3,553,297 | fastest |

| Table function | 40.0 | 2,500,696 | 1.4x slower |

500,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual DELETE | - | - | skipped |

| Batch (:batch) | - | - | skipped |

| UNNEST list params | 956.8 | 522,595 | 9.4x slower |

| Arrow stream join | 642.3 | 778,404 | 6.3x slower |

| Temp table join | 101.6 | 4,922,569 | fastest |

| Table function | 148.8 | 3,359,951 | 1.5x slower |

1,000,000 rows

| Method | Time (ms) | Rows/sec | vs Best |

|---|---|---|---|

| Individual DELETE | - | - | skipped |

| Batch (:batch) | - | - | skipped |

| UNNEST list params | 1,891.2 | 528,756 | 10.3x slower |

| Arrow stream join | 1,248.1 | 801,192 | 6.8x slower |

| Temp table join | 184.2 | 5,429,384 | fastest |

| Table function | 302.2 | 3,308,981 | 1.6x slower |

System Information

| Java | 25 (Eclipse Adoptium) |

| OS | Linux 6.8.0-107-generic |

| Arch | amd64 |

| CPUs | 24 |

| Max Memory | 7,956 MB |

| DuckDB | v1.5.2 |

| Warmup Runs | 1 |

| Measurement Runs | 3 |

Appender: The Simple Default

Section titled “Appender: The Simple Default”The Appender is the right starting point for most Java applications. It’s simple, has no extra dependencies, and at 160K rows/sec it’s 30× faster than JDBC batch. For batches under 5K rows, it’s actually the fastest method.

Table Functions and Arrow: For Higher Throughput

Section titled “Table Functions and Arrow: For Higher Throughput”Above 10K rows, Table Functions (427K rows/sec at 1M) and Arrow (381K rows/sec) both deliver 2.5–2.7× the Appender’s throughput:

- Table Functions need no extra dependencies — just the DuckDB JDBC driver. You implement a

bind()/init()/apply()callback that writes columnar vectors. The key advantage: the entire operation runs as a single SQL statement, regardless of data size. DuckDB streams through the callback internally. This is why Table Functions overtake Arrow at large sizes (500K+). For UPDATE/DELETE they place second behind temp-table-join at 3.0–3.4M rows/sec. - Arrow requires Apache Arrow JARs and more code (schema definitions, vector building, stream registration, off-heap memory management). Arrow vectors must be fully built in memory before registering, so data is processed in chunks — each chunk is a separate SQL statement. At 1M rows with 10K-row chunks, that’s 100 statements vs Table Function’s 1. Arrow wins at medium sizes (10K–100K) but falls behind Table Functions at 1M. It’s the right choice when your data is already columnar or when you need interop with other Arrow-based systems.

Temp Table + Appender: For Bulk Mutations

Section titled “Temp Table + Appender: For Bulk Mutations”The temp-table-join pattern delivers 5.8M deletes/sec and 3.8M updates/sec at 1M rows — over 1,000× faster than JDBC batch. Instead of executing 1M individual statements, DuckDB executes a single set-based join. UNNEST (the other set-based approach) is 9–11× slower at 1M.

JDBC Batch: For Small Operations

Section titled “JDBC Batch: For Small Operations”On DuckDB, addBatch() / executeBatch() provides almost no speedup over individual statements (~5K vs ~3.3K rows/sec). DuckDB doesn’t rewrite batched statements the way PostgreSQL does with reWriteBatchedInserts. JDBC Batch is still the simplest option and works across both DuckDB and PostgreSQL, making it useful for portable code or small batches.

Methodology

Section titled “Methodology”- DuckDB JDBC (in-process, on-disk database)

- Java 25 (Temurin)

- Apache Arrow 19.0.0

- Each method: warmup + measurement runs, median reported

- Data verified after every operation (row count + value checks)

- All methods use a single connection

- All methods accept

Iterable(notList) — no method assumes the full dataset fits in memory - Methods that need batching (Arrow, UNNEST, Multi-value, JDBC Batch) chunk at 10,000 rows internally

- The Table Function streams naturally via its

Iterator-based callback at 2,048 rows per chunk

Try It Yourself

Section titled “Try It Yourself”git clone https://github.com/sqg-dev/sqgcd sqg/examples/java-duckdb-benchmarkjust run # normal (skips slow methods at large sizes)just run --all # include Individual/Batch at every sizeHow We Built This

Section titled “How We Built This”The benchmark uses SQG, a type-safe SQL code generator. You write annotated .sql files with your queries, and SQG introspects them against a real database at build time to generate type-safe access code for Java, TypeScript, or Python. No ORM, no runtime reflection — just plain SQL in, type-safe code out.

For example, this annotation:

-- TABLE sensor_readings :appendergenerates a complete type-safe SensorReadingsAppender class with append(UUID deviceId, OffsetDateTime timestamp, ...) — column types inferred directly from DuckDB.

From the single queries.sql file, SQG generated most of the code used in this benchmark:

- The Appender for

sensor_readings(fromTABLE sensor_readings :appender) - Batch INSERT/UPDATE/DELETE methods (from

:batchannotations) - UNNEST bulk delete/update methods (

delete_readings_unnest) - The temp-table staging pattern:

CREATEEXECs, staging Appenders (TABLE _delete_keys :appender), and set-based join EXECs - Individual row methods and verification queries

The Arrow and Table Function methods are the only hand-written ones — everything else is generated from SQL.

SQG can currently generate DuckDB appender code and also produce Arrow code for reading results (SELECT). We are planning to add

more support for generating code for UDFs and methods which use Arrow as input.

Questions or feedback? Join the discussion on GitHub.